The following publication has been lightly reedited for spelling, grammar, and style to provide better searchability and an improved reading experience. No substantive changes impacting the data, analysis, or conclusions have been made. A PDF of the originally published version is available here.

Traditionally, government regulation in the United States follows a straightforward command approach. The government states the desired activities of the regulated firm, the allowable actions, and the prohibited actions. The firm is punished if it violates these strictures. Regulatory economists have identified many problems with this approach. First, there is the problem of asymmetry in information: Regulations cannot be enforced if they require information from the firm that the regulator cannot elicit reliably and at reasonable cost.

Second, there is the problem of asymmetry in expertise: Regulated firms have far more expertise in their industry than regulators. The firm’s management may know how to achieve regulators’ goals at a lower cost than would be permitted by the regulations. Unfortunately, the command approach rarely gives management the flexibility to carry out these cheaper approaches.

A third problem is that of implementation: Complex regulations are difficult to implement. As a practical matter, command regulations must be simpler than the activity they regulate. The result is “one-size-fits-all” rules, which are either overly restrictive and heavy-handed or not strong enough to achieve public policy goals. Finally, the command approach is often subject to the law of unintended consequences: The command regulations may induce unintended perverse behavior by the regulated firm.

In response to the flaws in command regulation, a new approach to regulation has evolved. “Incentive-compatible” regulation seeks to align the incentives of regulated firms with regulatory goals. Appropriately designed regulatory structures cause the firm to internalize regulatory objectives. With their superior access to information and expertise, firms can further the public interest more effectively and more cheaply than under the command approach. Incentive-compatible regulation is generally preferred by regulated firms, since it cedes them a degree of autonomy not permitted in the command approach. The firm can choose the least burdensome way of meeting the regulator’s goals. Competition ensures that firms achieve social goals at minimum cost.

As an example of incentive-compatible regulation, this Fed Letter describes the evolution in approaches for determining a bank’s regulatory capital for market risk. By “market risk,” we mean the risk of losses to the bank from price movements in financial markets. Over the past 15 years, trading in financial markets has become a significant activity for large commercial banks (those with total assets in excess of $100 billion). In 1979, only 1.3% of these banks’ assets were actively traded financial instruments. By 1994, this had grown to 13.2%.¹ Bank capital, and, ultimately, the Bank Insurance Fund, is at risk if the value of this trading book falls rapidly due to a precipitous change in financial market prices. Bank observers have suggested that the risk to banks may be exacerbated by the increased use of derivatives.² While derivative securities can be a risk-reducing tool, they also offer an inexpensive way to construct a highly leveraged asset position, which is particularly vulnerable to rapid movements in financial markets. For example, the collapse of Barings Bank last year was precipitated by a high-stakes bet on the direction of Japanese stock prices, using financial derivatives traded on exchanges in Singapore and Osaka.

Proposed capital standards for market risk

A number of approaches have been proposed for setting regulatory capital levels against market risk. One of the first was proposed in April 1993 by the Basel Committee on Banking Supervision. Known as the standardized approach, this proposal followed the conventional philosophy of command regulation. It divides trading book assets into different risk classes and assesses a fixed capital charge against each class.
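The mechanics of a class-based charge can be shown in a few lines. The following is a minimal sketch only: the risk classes, the charge rates, and the function name `standardized_capital` are hypothetical and are not taken from the Basel proposal.

```python
# Hypothetical risk classes and charge rates, chosen only for illustration;
# they are NOT the figures used in the Basel Committee's proposal.
CHARGE_RATES = {
    "equities": 0.08,
    "government_bonds": 0.02,
    "foreign_exchange": 0.08,
}


def standardized_capital(positions, charge_rates=CHARGE_RATES):
    """Sum a fixed percentage charge over the absolute position in each class."""
    return sum(
        charge_rates[risk_class] * abs(amount)
        for risk_class, amount in positions.items()
    )


# Two equity books of equal size receive the same charge, whatever the
# riskiness of the individual stocks they hold.
blue_chip_book = {"equities": 100.0}
speculative_book = {"equities": 100.0}
same_charge = standardized_capital(blue_chip_book) == standardized_capital(speculative_book)
print(same_charge)
```

Because the charge depends only on the class and size of a position, nothing in the calculation distinguishes a cautious portfolio from an aggressive one within the same class.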

The standardized approach suffers from asymmetry both in information and in risk-assessment expertise between banks and regulators. Capital is based on the bank’s current asset position, and thus fails to account for dynamic trading strategies that can be adopted by the bank for risk control. For example, a bank may have contingency plans, based on proprietary risk-management models, to move in and out of certain markets as risk levels change. This ability is not reflected in the regulatory process. Furthermore, the risk-assessment models used by large commercial banks are far superior to the crude risk classes of the standardized approach. The standardized approach cannot take advantage of the banks’ detailed knowledge of asset and financial markets. Even if regulators possessed this expertise, the cost of administering capital requirements at this level of detail would be prohibitive. This necessitates an overly simple (critics would say “crude”) set of regulations. Finally, the standardized approach provides perverse incentives, potentially leading to unintended consequences. For example, because all equity holdings are assessed the same capital charge, banks may be inclined to increase their holdings in riskier stocks. This outcome would go directly against regulatory goals and would probably be suboptimal for the banks themselves.

In response to this criticism, the Basel Committee issued a revised proposal known as the “internal-models” approach in April 1995. A version of this proposal was subsequently approved by the governors of the central banks of the G-10 countries.³ In an important development in the history of regulatory practice, the approach allows banks to use their own internal risk-assessment models to determine the risk of their financial asset portfolio. Each bank would compute the maximum loss it might sustain over the next ten days with 99% confidence (the so-called value at risk), and regulators would set their capital charge as a multiple of this number. (The multiple would be at least three. The regulators would have the discretion to choose a higher multiplier if a comparison between the estimates of the internal models and actual performance suggested deficiencies in the internal models.⁴)
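As a rough illustration of the calculation, the sketch below computes a ten-day, 99% value at risk and applies the multiplier. It rests on a strong simplifying assumption that is not part of the proposal: daily profit and loss is treated as independent, zero-mean, and normally distributed, so that ten-day volatility is daily volatility times the square root of ten. A bank’s actual internal model would be far richer, and the function names here are hypothetical.

```python
from math import sqrt
from statistics import NormalDist, stdev


def value_at_risk(daily_pnl, horizon_days=10, confidence=0.99):
    """Parametric VaR under an assumed zero-mean normal model of daily P&L.

    Assumption (not from the proposal): daily P&L is independent across days,
    so volatility over the horizon scales with sqrt(horizon_days).
    """
    z = NormalDist().inv_cdf(confidence)  # roughly 2.33 at 99% confidence
    return z * stdev(daily_pnl) * sqrt(horizon_days)


def market_risk_capital(var, multiplier=3.0):
    """Capital charge as a multiple of value at risk; the multiple is at least three."""
    if multiplier < 3.0:
        raise ValueError("the multiplier must be at least three")
    return multiplier * var


# Made-up daily trading P&L figures, in millions of dollars.
sample_pnl = [1.0, -1.0, 2.0, -2.0, 0.5, -0.5]
sample_var = value_at_risk(sample_pnl)
sample_capital = market_risk_capital(sample_var)
```

A regulator dissatisfied with the model’s track record would pass a `multiplier` above 3.0, which raises the charge proportionally.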

The internal-models approach addresses the problem of expertise asymmetry, since it uses the bank’s more sophisticated risk-assessment technology to determine the bank’s market risk. However, it also imposes restrictive quantitative standards on the models being used by banks. Therefore, it still has many of the problems associated with command regulation. It may induce banks to tailor their models toward the regulatory process rather than toward greater accuracy, which would also stifle technical innovation in risk-management models. An unintended consequence could be that banks develop two sets of models. One, to be used internally for risk assessment, would not follow the conditions imposed by the regulator, but would incorporate the latest technical developments in the field. The other, to be used solely for determining regulatory capital, would follow the regulator’s conditions, but would be constructed to minimize regulatory capital. This would undo much of the expected gain from basing capital requirements on the banks’ more accurate risk-assessment models.

Furthermore, there is the problem of asymmetry of information between the banks and the regulators: There is essentially no way for the regulator to verify that the value-at-risk number given to the regulator corresponds to the risk assessment used by the bank’s management. Finally, the internal-models approach focuses on static risk measurement. It ignores the possibility of dynamic risk management and the complex linkages between risk assessment and risk management.

An incentive-compatible approach

Due to the weaknesses of the standardized approach and the internal-models approach, many economists suggest scrapping the command approach entirely in favor of incentive-compatible regulation. In particular, Paul Kupiec, James O’Brien, and other staff members at the Federal Reserve Board have proposed a “precommitment approach” to bank capital regulation.⁵ This approach is very simple. Each bank states the maximum loss that its trading book will sustain over the next period. The capital charge for market risk equals this precommitted maximum loss. If the bank’s losses exceed the precommitted level, a penalty is imposed.
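The rule just described can be written down directly. In the sketch below, the capital charge equals the precommitted loss, as in the proposal. The form of the penalty is an assumption made purely for illustration: a fine proportional to the amount by which the realized loss exceeds the commitment, governed by a hypothetical `penalty_rate`.

```python
def precommitment_outcome(precommitted_loss, realized_loss, penalty_rate=1.0):
    """Return (capital_charge, penalty) for one period.

    The capital charge equals the precommitted maximum loss.
    Assumption: the penalty is a fine proportional to the excess loss;
    `penalty_rate` is a hypothetical parameter, not part of the proposal.
    """
    capital_charge = precommitted_loss
    excess_loss = max(0.0, realized_loss - precommitted_loss)
    return capital_charge, penalty_rate * excess_loss


# A bank that commits to a maximum loss of 50 and loses 40 pays no penalty.
within_commitment = precommitment_outcome(50.0, 40.0)

# The same bank losing 65 breaches its commitment by 15 and is penalized.
breach = precommitment_outcome(50.0, 65.0, penalty_rate=2.0)
```

Other penalty forms, such as higher future capital requirements or public disclosure, would replace the last line of the function without changing the basic structure.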

Under the precommitment approach, the bank is free to choose both the amount of market risk and the amount of capital to hold against this risk. The penalty can be structured so that banks voluntarily choose a risk level for their portfolios and a level of regulatory capital that meets the regulator’s objectives, without any direct regulatory interference. The regulator’s only role in this approach is to verify that the bank maintains an adequate risk-management structure and to review the bank’s profit-and-loss information.

Why might the precommitment approach work better than the command approaches? First, it economizes on costly capital. Banks can choose whether to control risk via higher capital set-asides, more sophisticated dynamic hedging strategies, or a reduction in portfolio risk. It is in their own interest to choose the least expensive method of risk control.

A second benefit of precommitment is that it encourages the development of improved risk-management technologies. Banks have a clear incentive to use the most sophisticated methods for assessing portfolio risk. If their risk assessment is too conservative, they set aside too much capital; if the assessment is insufficiently conservative, they will violate the precommitted loss levels too often and bear higher-than-expected penalties. Either way, inaccurate risk assessment is costly, so banks will invest in procedures that increase the accuracy of their models.
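This trade-off can be made concrete with a stylized calculation. The sketch below is an illustration under assumptions that do not come from the proposal: trading losses over the period follow a normal distribution known to the bank, holding capital costs a fixed rate per unit, and a breach triggers a flat penalty. All parameter values are made up.

```python
from statistics import NormalDist


def expected_cost(commitment, loss_dist, capital_cost_rate, penalty):
    """Cost of holding the committed capital plus the expected flat penalty."""
    breach_probability = 1.0 - loss_dist.cdf(commitment)
    return capital_cost_rate * commitment + penalty * breach_probability


def best_commitment(loss_dist, capital_cost_rate, penalty, grid):
    """Pick the commitment on the grid with the lowest expected cost."""
    return min(
        grid,
        key=lambda c: expected_cost(c, loss_dist, capital_cost_rate, penalty),
    )


# Hypothetical inputs: losses with mean 0 and standard deviation 10,
# a 5% cost of capital, and a flat penalty of 50 for any breach.
losses = NormalDist(mu=0.0, sigma=10.0)
grid = [float(c) for c in range(0, 101)]
choice = best_commitment(losses, capital_cost_rate=0.05, penalty=50.0, grid=grid)

# A harsher penalty pushes the bank toward a larger commitment.
cautious_choice = best_commitment(losses, capital_cost_rate=0.05, penalty=500.0, grid=grid)
```

With these made-up numbers the cheapest commitment is about 27, well short of the largest value on the grid: committing more would tie up costly capital, while committing less would make a breach too likely. A bank that misjudges the loss distribution ends up away from this minimum, which is the sense in which inaccurate risk assessment is costly.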

The precommitment approach also has advantages for the banking industry. Precommitment is less burdensome and intrusive than the command approaches.⁶ Banks can run their affairs as they like, provided their capital levels (in their own assessment) are commensurate with their risk levels. The regulator steps in only if the capital level, ex post, proves insufficient. Unlike the internal-models approach, the precommitment approach does not mandate a fixed “multiplier” on a bank’s value at risk but allows the bank to commit a level of capital that optimally trades off the cost of capital against the probability of a regulatory penalty. Moreover, the process does not impose quantitative restrictions on banks’ risk-assessment models. The bank has almost unlimited flexibility in developing its risk-assessment and risk-management approaches. Of course, it must then accept the consequences, including the possibility of regulatory penalties.

Finally, the precommitment approach also offers banks the advantage of a low reporting burden. The only data banks would have to report to regulators would be periodic profit-and-loss statements on their trading books. These data are already computed regularly by banks for risk-management purposes.

Issues to be addressed

The details of the precommitment approach are still being formulated. While generally supportive, banks have expressed several concerns about the way that precommitment is to be carried out. A critical issue is the design of the penalty. The simplest form would be a monetary fine. A potential problem with assessing a fine is that it “hits the bank when it is down”: The fine would be levied when the bank had recently sustained significant losses. While this is a common argument, many contracts have similar provisions. For example, debt contracts often specify an increase in the loan rate if the firm’s condition deteriorates. Alternatives to a monetary fine could include higher future capital requirements, restrictions on future trading, and public disclosure of the violation. Of course, these penalty structures are not mutually exclusive.

Another concern banks have expressed is that precommitment could have perverse incentives if implemented for poorly capitalized banks. If a poorly capitalized bank’s trading losses are approaching the critical level, it may be near bankruptcy. Such a bank will not be deterred by the threat of a penalty, since, literally, there will be no bank left to penalize. In such a case, the bank might be tempted to put its remaining assets in highly speculative investments, in the hope that a favorable roll of the dice will bail it out. The precommitment approach, as currently proposed, avoids this problem by limiting participation to well-capitalized banks. This would be consistent with the limitations imposed on undercapitalized institutions under the Federal Deposit Insurance Corporation Improvement Act.
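The reason the penalty loses its force for a weak bank can be seen in a small sketch. It assumes, for illustration only, that the penalty is a monetary fine and that the bank cannot be made to pay more than the capital it has left after its trading losses; the function name and figures are hypothetical.

```python
def effective_penalty(nominal_penalty, capital, trading_loss):
    """Fine the bank can actually be made to pay, capped by its surviving capital.

    Assumption: limited liability, so the fine cannot exceed whatever
    capital remains once trading losses have been absorbed.
    """
    remaining_capital = max(0.0, capital - trading_loss)
    return min(nominal_penalty, remaining_capital)


# A well-capitalized bank bears the full fine of 20.
strong_bank = effective_penalty(20.0, capital=100.0, trading_loss=30.0)

# A thinly capitalized bank with the same loss can pay only what is left.
weak_bank = effective_penalty(20.0, capital=35.0, trading_loss=30.0)

# An insolvent bank pays nothing, so the threat does not deter it.
failed_bank = effective_penalty(20.0, capital=25.0, trading_loss=30.0)
```

As remaining capital shrinks toward zero, so does the penalty the bank expects to bear, which is why the proposal restricts participation to well-capitalized banks.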

Finally, banks have expressed concern that, as currently proposed, precommitment would not be imposed on nonbank financial institutions. This would seem to “unlevel” the industry’s playing field. It should be noted that banks have access to deposit insurance and other safety net facilities, so it is appropriate to hold them to a different standard than other (nonbanking) financial institutions. However, to the extent that nonbanks enjoy implicit government guarantees (the notion of “too big to fail”), perhaps capital regulation should be extended to these institutions as well.

Conclusion

The precommitment approach is a way of inducing banks to choose a level of capital and a risk-management strategy that achieve regulators’ social goals, without imposing heavy-handed regulations directly on bank activities. In particular, it incorporates the advantages of the internal-models approach without the intrusiveness and informational problems associated with that approach. It attempts to improve on the advantages of internal models by better aligning capital requirements with the trading account’s actual risk exposure over an extended interval. Precommitment is an attempt to apply the philosophy of incentive-compatible regulation to the financial services industry. As such, it provides a laboratory for working out implementation details for this regulatory philosophy, and it may suggest ways of incorporating this philosophy into other tasks in financial regulation.

Tracking Midwest manufacturing activity

Manufacturing output indexes (1987=100)

| | January | Month ago | Year ago |
|---|---|---|---|
| MMI | 143.0 | 143.4 | 142.9 |
| IP | 124.0 | 124.7 | 124.1 |

Motor vehicle production (millions, seasonally adj. annual rate)

| | February | Month ago | Year ago |
|---|---|---|---|
| Cars | 6.1 | 5.7 | 7.2 |
| Light trucks | 5.6 | 5.2 | 5.2 |

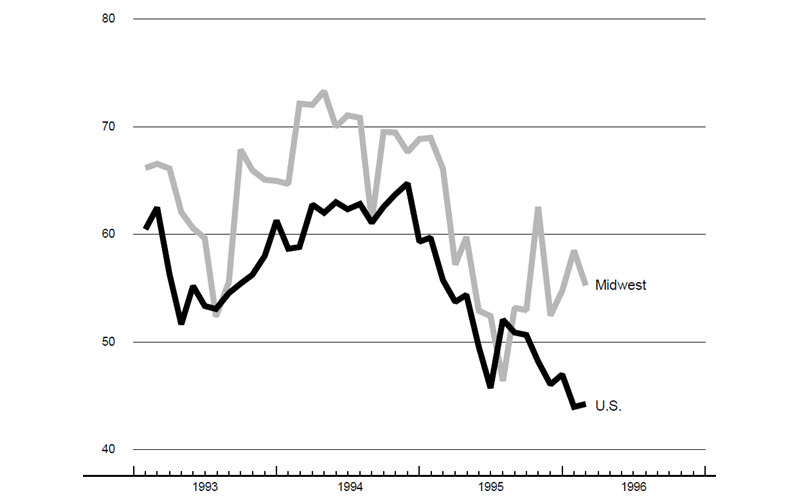

Purchasing managers’ surveys: net % reporting production growth

| | February | Month ago | Year ago |
|---|---|---|---|
| MW | 55.3 | 58.5 | 66.2 |
| U.S. | 44.3 | 44.0 | 55.8 |

Purchasing managers’ surveys (production index)

Sources: The Midwest Manufacturing Index (MMI) is a composite index of 15 industries, based on monthly hours worked and kilowatt hours. IP represents the Federal Reserve Board industrial production index for the U.S. manufacturing sector. Autos and light trucks are measured in annualized units, using seasonal adjustments developed by the Board. The purchasing managers’ survey data for the Midwest are weighted averages of the seasonally adjusted production components from the Chicago, Detroit, and Milwaukee Purchasing Managers’ Association surveys, with assistance from Bishop Associates, Comerica, and the University of Wisconsin–Milwaukee.

Midwest manufacturing activity was strong in February but began to show increasing signs of variability. The composite purchasing managers’ survey was well above the national survey, indicating continued expansion in manufacturing. Among the three regional surveys that make up the Midwest composite, the Detroit and Milwaukee surveys showed expansion in both January and February. In contrast, Chicago was down sharply, falling into line with the national survey after posting a strong gain in December. Both the MMI and the IP show a dip in production in January.

Light-vehicle production in January was disrupted by the East Coast blizzard. Units assembled dropped from 11.6 million (saar) in December to 10.8 million in January (reflected in the MMI and IP). A rebound is expected in February and March (barring further disruptions from the UAW strike in Dayton).

Notes

1 Data on banks’ trading book assets are from Alan Berger, Anil Kashyap, and Joseph Scalise, “The transformation of the U.S. banking industry,” Brookings Papers on Economic Activity, Vol. 2, 1995.

2 For example, consider the following statement by Charles A. Bowsher, Comptroller General of the United States, on May 19, 1994: “…the sudden failure or abrupt withdrawal from trading of any of these large U.S. [derivatives] dealers could cause liquidity problems in the markets and could also pose risks to the others, including federally insured banks and the financial system as a whole.” (Testimony before the Subcommittee on Telecommunications and Finance, Committee on Energy and Commerce, House of Representatives, May 19, 1994.)

3 “Final capital standards for market risk,” published by the Basel Committee on Banking Supervision, December 1995.

4 See, for example, the request for comments in “Risk-based capital standards: Market risk; internal models backtesting,” Federal Register, Vol. 61, No. 46, March 7, 1996.

5 Paul Kupiec and James O’Brien, “A precommitment approach to capital requirements for market risk,” Federal Reserve Board of Governors, working paper, June 1995.

6 “Fed’s trading set-aside plan draws mix of catcalls, kudos,” American Banker, November 8, 1995.